Terrain Relative Navigation

In this study, we propose a method for autonomously guiding a spacecraft to a flat site where landing is possible. By proposing a method with unprecedented autonomy, we aim to achieve a breakthrough in landing on very distant celestial bodies.

Abstract

近年,小天体探査が世界的に注目されている.これらのミッションでは,着陸やランデブーのために高精度の光学航法が重要である.そのため,公称地形情報と実際の地形情報とを比較することで偏差を推定する地形相対航法(TRN: Terrain Relative Navigation)が用いられることが多い.特に小天体探査では,目標物到着後に形状モデルを作成するための十分な観測が可能である.したがって,公称地形情報の生成には,目標体の形状モデルが利用される.

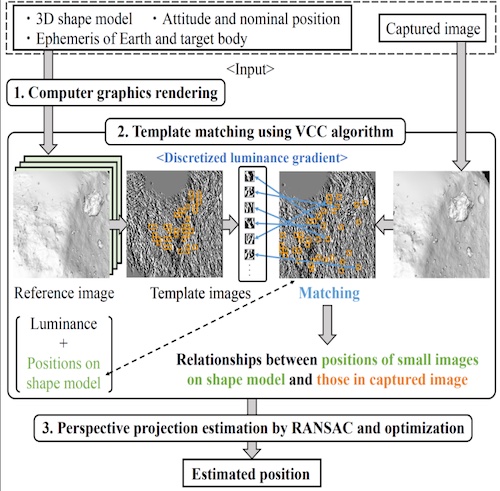

最近,我々はTRNを用いた自律光学航法について研究している.提案手法の場合,まず,レンダリングにより,指定位置からの参照画像を生成する.参照画像の各画素に対する形状モデル上の三次元位置も輝度とともに記憶する.次に,参照画像から抽出した複数の小画像をテンプレートマッチングにより撮影画像と比較する.このため,形状モデル上の3次元位置と撮影画像中の複数の小画像との関係を求めることができる.最後に,3次元形状を2次元平面に投影する透視投影を推定することで,宇宙機の実際の位置を求めることができる.これらの理由により,形状モデルを利用することで,宇宙機の3次元位置を高精度に直接推定することができる.提案手法を他の手法と比較することで,推定精度と計算時間を評価した.その結果,複数の画像解像度に対して高い推定精度を実時間で実現することができた.提案手法は,今後,より高精度に小天体への着陸を行うためのキーテクノロジーとなると考えられる.

The small body explorations have received attention around the world in recent years. In these missions, high-accuracy optical navigation is important for the landing or rendezvous. Therefore, Terrain Relative Navigation (TRN) to estimate deviations by comparing nominal terrain information with actual terrain information is often used. Enough observation of a target body to make a shape model is possible after arrival, especially in the small body explorations. Accordingly, the shape model of the target body is utilized for the generation of the nominal terrain information.

We have recently been studying an autonomous optical navigation method based on TRN. In the proposed method, a reference image as seen from a nominal position is first generated by rendering. Along with the luminance, the three-dimensional position on the shape model corresponding to each pixel of the reference image is also stored. Next, multiple small images extracted from the reference image are compared with a captured image by template matching. This yields the correspondence between three-dimensional positions on the shape model and the locations of the small images in the captured image. Finally, the actual position of the spacecraft is determined by estimating the perspective projection that maps the three-dimensional shape onto the two-dimensional image plane. In this way, using the shape model allows the three-dimensional position of the spacecraft to be estimated directly and with high accuracy. We evaluated the estimation accuracy and computation time by comparing the proposed method with other methods. The results show that high estimation accuracy is achieved in real time for several image resolutions. We believe the proposed method will become a key technology for landing on small bodies with higher accuracy in the future.
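The matching and position-estimation steps can be illustrated with a minimal pure-Python sketch. It is not the implementation evaluated in this study. The function names (`match_template`, `estimate_position`, and the helpers) are hypothetical, and the sketch makes simplifying assumptions that the abstract does not state: template matching is a brute-force normalized cross-correlation search, the camera is an ideal pinhole with focal length `f` in pixels, pixel coordinates are measured from the principal point, and the camera attitude `R` is already known (for example from a star tracker), so that only the position remains to be solved by linear least squares.

```python
import math

def ncc(patch, image, top, left):
    """Zero-mean normalized cross-correlation of patch with one image window."""
    h, w = len(patch), len(patch[0])
    p = [patch[i][j] for i in range(h) for j in range(w)]
    q = [image[top + i][left + j] for i in range(h) for j in range(w)]
    mp, mq = sum(p) / len(p), sum(q) / len(q)
    num = sum((a - mp) * (b - mq) for a, b in zip(p, q))
    den = math.sqrt(sum((a - mp) ** 2 for a in p) * sum((b - mq) ** 2 for b in q))
    return num / den if den > 0 else 0.0

def match_template(patch, image):
    """Exhaustive search; returns (row, col) of the best-scoring window."""
    h, w = len(patch), len(patch[0])
    candidates = [(r, c) for r in range(len(image) - h + 1)
                  for c in range(len(image[0]) - w + 1)]
    return max(candidates, key=lambda rc: ncc(patch, image, rc[0], rc[1]))

def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for k in range(3):
        piv = max(range(k, 3), key=lambda r: abs(M[r][k]))
        M[k], M[piv] = M[piv], M[k]
        for r in range(k + 1, 3):
            factor = M[r][k] / M[k][k]
            for c in range(k, 4):
                M[r][c] -= factor * M[k][c]
    x = [0.0] * 3
    for k in (2, 1, 0):
        x[k] = (M[k][3] - sum(M[k][c] * x[c] for c in range(k + 1, 3))) / M[k][k]
    return x

def project(point, cam_pos, R, f):
    """Pinhole projection; rows of R are the camera axes in the body-fixed frame."""
    d = [point[i] - cam_pos[i] for i in range(3)]
    xc, yc, zc = (sum(R[k][i] * d[i] for i in range(3)) for k in range(3))
    return f * xc / zc, f * yc / zc

def estimate_position(points, pixels, R, f):
    """Least-squares camera position from 3D-2D matches (attitude R assumed known).

    Each match gives two linear equations in the camera position C:
    (u*r3 - f*r1) . C = (u*r3 - f*r1) . X, and likewise for v with r2.
    """
    AtA = [[0.0] * 3 for _ in range(3)]
    Atb = [0.0] * 3
    for X, (u, v) in zip(points, pixels):
        for a in ([u * R[2][i] - f * R[0][i] for i in range(3)],
                  [v * R[2][i] - f * R[1][i] for i in range(3)]):
            rhs = sum(a[i] * X[i] for i in range(3))
            for i in range(3):
                Atb[i] += a[i] * rhs
                for j in range(3):
                    AtA[i][j] += a[i] * a[j]
    return solve3(AtA, Atb)
```

In such a pipeline, `match_template` would be run once per small image cut from the rendered reference image, and each matched location would be paired with the three-dimensional shape-model position stored for that small image. The resulting 3D-2D pairs are the input to `estimate_position`. At least two well-separated matches are needed for the three position unknowns, and more matches make the least-squares solution less sensitive to individual matching errors.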